Symmetric games usually aren’t. Chess gives White a small but measurable edge; Go compensates with a komi; Connect Four is a first-player win under perfect play. Cell Division, we assumed, would be somewhere in that neighborhood. We were wrong about the magnitude. On the board sizes we ship, Player 1 wins self-mirror matches between identical AI copies up to 96.8% of the time. This post is about measuring that edge, why it’s so lopsided, and what we’re doing about it.

Why we expected an edge, and why we expected it small

In Cell Division, players alternate placing cells on a square board until every cell is claimed. Score is purely positional — one point per isolated cell, plus two points per active connection axis — and there is no rule-level compensation for going second. No komi, no pie rule, no tempo offset. The game engine is a 60-line alternating loop in ai/src/tournament/game_runner.py.

Our intuition: on even-sided boards (4×4, 6×6, 8×8), both players take exactly half the cells, so any first-player edge should come from tempo — claiming high-openness cells before the opponent can respond. On odd-sided boards (5×5, 7×7), there’s an extra effect on top: the cell count is odd, so Player 1 makes one more move than Player 2. On a 5×5 board that’s 13 moves versus 12; on 7×7 it’s 25 versus 24. The extra cell has to score something.
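The move-count arithmetic is easy to check. A minimal sketch, assuming only that players alternate until the board is full (`move_counts` is a name introduced here, not something from the game engine):

```python
from typing import Tuple

def move_counts(side: int) -> Tuple[int, int]:
    """Return (P1 moves, P2 moves) when players alternate until the board is full."""
    cells = side * side
    p1 = (cells + 1) // 2  # P1 moves first, so P1 gets the odd cell out
    p2 = cells // 2
    return p1, p2

for side in range(4, 9):
    p1, p2 = move_counts(side)
    print(f"{side}x{side}: P1 {p1} moves, P2 {p2} moves, extra for P1: {p1 - p2}")
```

On even sides the two counts are equal; on odd sides Player 1 is always exactly one move ahead.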

What we didn’t expect was for that extra cell to dominate the result on odd boards, or for tempo and the extra move together to turn Elite into a near-lock for Player 1 on 5×5 and 7×7.

The experiment: self-mirror

The cleanest way to measure first-player advantage is to pit an AI against itself. Any edge that comes back is a property of the board, not of one side being stronger. We do this for all five shipped tiers: Easy, Medium, Hard, Elite (linear), and Elite (CNN). Each tier plays on every board size from 4×4 to 8×8, at 500 games per (tier, size) cell of the results table.

The stochastic tiers (Easy is pure random, Medium is a softmax-sampling heuristic at temperature 1.5) produce variance naturally. The deterministic tiers (Hard and both Elites play argmax) would produce the same game 500 times without intervention, so for those we force the first two moves to be uniform-random and let the AI drive from move three onward. That gives us 500 games per (tier, size) cell with each AI's preferred policy exercised on a distribution of openings, not just one. The games are not all distinct: on 4×4 there are only 16 × 15 = 240 possible two-move openings, so a deterministic policy can produce at most 240 different games there.
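To make the forced-opening idea concrete, here is a minimal sketch under stated assumptions. It is not the code in the repository: the flat-list board, the toy `lowest_index_policy`, and the function name `play_randomized_opening_sketch` are all hypothetical stand-ins, and the sketch records only the move sequence, not the score.

```python
import random
from typing import Callable, List, Optional

Board = List[Optional[int]]           # None = empty, 1 or 2 = owning player
Policy = Callable[[Board, int], int]  # (board, player) -> index of an empty cell

def lowest_index_policy(board: Board, player: int) -> int:
    """Toy deterministic policy standing in for an argmax AI (hypothetical)."""
    return next(i for i, owner in enumerate(board) if owner is None)

def play_randomized_opening_sketch(policy: Policy, size: int, rng: random.Random,
                                   forced_moves: int = 2) -> List[int]:
    """Play one self-mirror game and return the sequence of chosen cells."""
    board: Board = [None] * (size * size)
    moves: List[int] = []
    for turn in range(size * size):
        player = 1 if turn % 2 == 0 else 2
        if turn < forced_moves:
            empty = [i for i, owner in enumerate(board) if owner is None]
            cell = rng.choice(empty)      # uniform-random forced opening move
        else:
            cell = policy(board, player)  # deterministic policy from here on
        board[cell] = player
        moves.append(cell)
    return moves

rng = random.Random(0)
games = {tuple(play_randomized_opening_sketch(lowest_index_policy, 4, rng))
         for _ in range(500)}
print(len(games), "distinct games out of 500")
```

With `forced_moves=0` the same toy policy replays one identical game every time, which is the degenerate case the forced opening is meant to avoid.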

```python
# ai/scripts/first_player_advantage.py
for ai in players:
    for size in [4, 5, 6, 7, 8]:
        for _ in range(500):
            if ai.name in DETERMINISTIC_TIERS:
                r = play_randomized_opening(ai, ai, size, rng)
            else:
                r = play_game(ai, ai, size)
```

The numbers

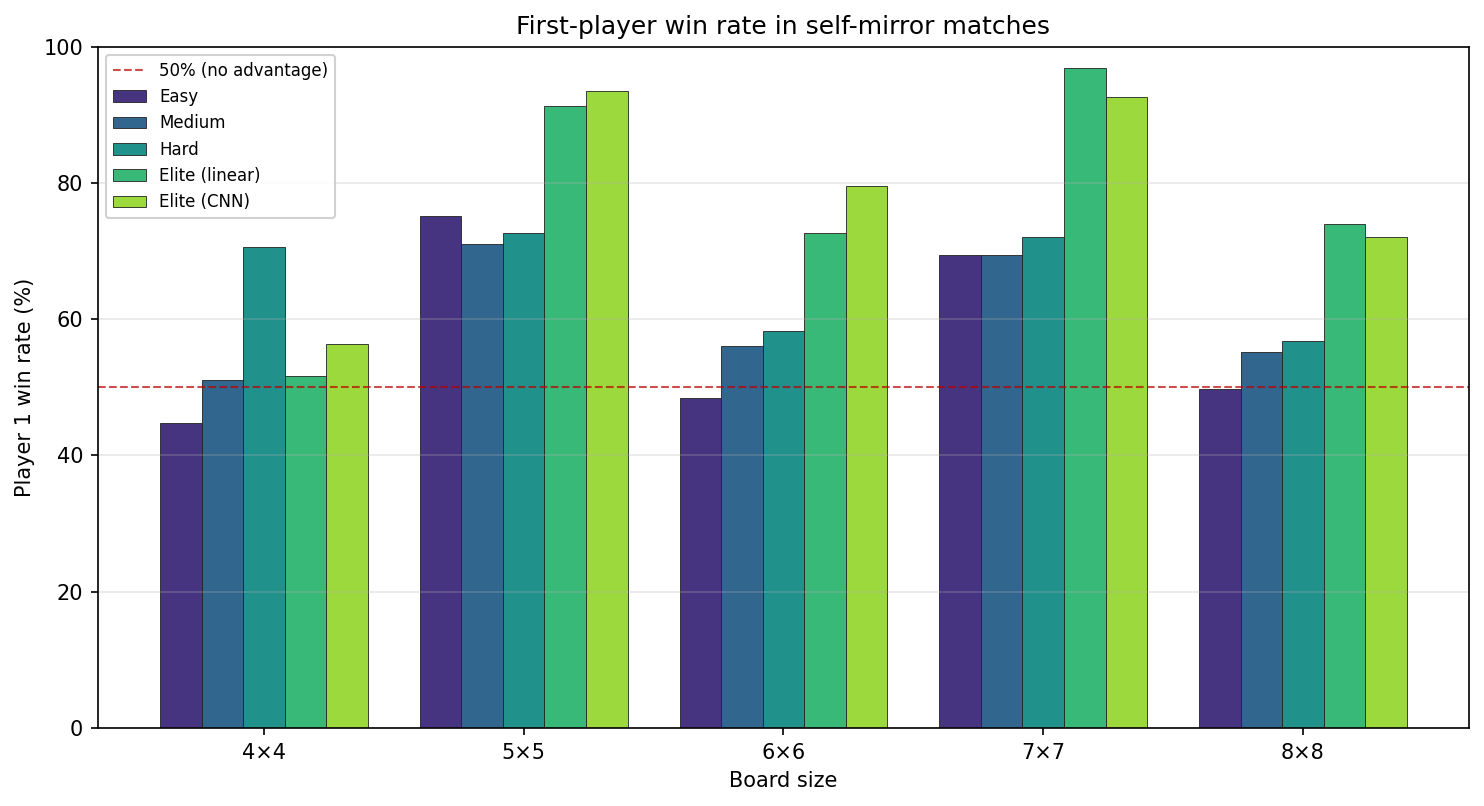

Here is the headline chart — P1 win percentage in self-mirror matches, grouped by board size, colored by tier. The dashed red line is 50% (no advantage).

The exact table (P1 win %, 500 games per cell):

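| Tier | 4x4 | 5x5 | 6x6 | 7x7 | 8x8 |
| --- | --- | --- | --- | --- | --- |
| Easy | 44.8 | 75.2 | 48.4 | 69.4 | 49.8 |
| Medium | 51.0 | 71.0 | 56.0 | 69.4 | 55.2 |
| Hard | 70.6 | 72.6 | 58.2 | 72.0 | 56.8 |
| Elite (linear) | 51.6 | 91.2 | 72.6 | 96.8 | 74.0 |
| Elite (CNN) | 56.4 | 93.4 | 79.6 | 92.6 | 72.0 |

On even boards, Easy and Medium stay within about six points of 50%, while the stronger tiers drift well above it (Hard reaches 70.6% on 4×4, and both Elites sit between 72% and 80% on 6×6 and 8×8). The odd-board columns are higher across the board: no tier falls below 69% on 5×5 or 7×7, and each tier's odd-board numbers beat its own even-board numbers. On 7×7, Elite (linear) beats a copy of itself 96.8% of the time as P1. That is not a game, that is a coin flip on move zero.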

The parity effect is the whole story

Read the table by row, not by column. Hard’s 5×5 number (72.6%) and its 7×7 number (72.0%) are both well above its 6×6 (58.2%) and 8×8 (56.8%) numbers; its 4×4 number (70.6%) is the exception, and we’ll get to the 4×4 oddities below. Elite (linear) jumps from 74.0% on 8×8 to 96.8% on 7×7. In the chart, the odd-board bars are taller than the even-board bars for every tier.

That’s the parity effect in isolation: one extra move on odd boards translates into an enormous win-rate delta. It makes intuitive sense — if both sides evaluate openness roughly the same way, whichever side gets the extra turn is the side that gets to place the last valuable cell. But seeing it crest above 90% for the two strongest tiers was still a surprise.
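One way to separate the odd-board and even-board effects is to average each tier's numbers by parity. A minimal sketch: the dictionary only transcribes the table above, and `parity_split` is a helper name introduced here for illustration.

```python
from typing import Dict, Tuple

# P1 win % in self-mirror play, transcribed from the table (500 games per cell).
P1_WIN_PCT: Dict[str, Dict[int, float]] = {
    "Easy":           {4: 44.8, 5: 75.2, 6: 48.4, 7: 69.4, 8: 49.8},
    "Medium":         {4: 51.0, 5: 71.0, 6: 56.0, 7: 69.4, 8: 55.2},
    "Hard":           {4: 70.6, 5: 72.6, 6: 58.2, 7: 72.0, 8: 56.8},
    "Elite (linear)": {4: 51.6, 5: 91.2, 6: 72.6, 7: 96.8, 8: 74.0},
    "Elite (CNN)":    {4: 56.4, 5: 93.4, 6: 79.6, 7: 92.6, 8: 72.0},
}

def parity_split(row: Dict[int, float]) -> Tuple[float, float]:
    """Return (mean over odd board sizes, mean over even board sizes)."""
    odd = [pct for size, pct in row.items() if size % 2 == 1]
    even = [pct for size, pct in row.items() if size % 2 == 0]
    return sum(odd) / len(odd), sum(even) / len(even)

for tier, row in P1_WIN_PCT.items():
    odd_mean, even_mean = parity_split(row)
    gap = odd_mean - even_mean
    print(f"{tier:15s} odd {odd_mean:5.1f}  even {even_mean:5.1f}  gap {gap:+5.1f}")
```

The even-board mean is a rough proxy for the tempo effect on its own, and the gap is a rough proxy for what the extra move adds on top. Averaging over two or three board sizes is crude, so treat the output as a summary of the table rather than a new measurement.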

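The effect also scales with strength, most visibly in how much tempo each tier converts. On 6×6 and 8×8, where both sides make the same number of moves, Easy sits at roughly 50% because its moves are random and it doesn’t reliably convert a tempo advantage into score; the Elite tiers convert the same tempo into 72% to 80%. On odd boards the extra cell lifts even Easy to around 70% to 75%, and Elite pushes that above 90%. That’s consistent with the tempo story: the edge exists for everyone, but only strong play cashes it in fully.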

The 4×4 outlier

One oddity: on 4×4, Easy’s P1 win rate is 44.8% — significantly below 50% (Wilson 95% CI [40.4%, 49.2%], N=500). Random play on the smallest board actually favors Player 2 by about five points.
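For readers who want to reproduce the interval, here is a minimal sketch of the Wilson score interval. It assumes 44.8% of 500 games means 224 P1 wins and that no games were excluded as draws; neither detail is stated above, so the result should land close to the quoted interval rather than match it digit for digit.

```python
import math
from typing import Tuple

def wilson_interval(wins: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 for ~95%)."""
    p = wins / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half

# Assumption: 224 wins out of 500 games corresponds to the reported 44.8%.
low, high = wilson_interval(224, 500)
print(f"[{low:.1%}, {high:.1%}]")
```

The point that matters for the argument is that the upper bound stays below 50%.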

Our best guess is that on a 16-cell board, the eight moves per side are so few that blocking opportunities dominate. Player 2 always moves second, which means Player 2 always gets the last word on adjacency — the last cell Player 1 sees before the game ends is placed by Player 2. With random play, that last-word effect compounds slightly against P1. Strong play negates it (Hard on 4×4 hits 70.6% for P1, partly because it can also choose not to give P2 easy blocks). We didn’t pre-register a hypothesis here; it’s an artifact worth flagging, not a finding we’re confident about.

Cross-tier: going first can overturn skill

The self-mirror number is the pure parity signal. The more practically alarming number is how much going first matters across tiers — does being P1 let a weaker AI beat a stronger one? To measure this, for every ordered pair (A, B) and every board size, we played 50 games with A first, 50 with A second, and took the swing: A’s win rate going first minus A’s win rate going second.

Mean absolute swing, averaged across all 20 ordered pairs on the roster:

- 4x4: 16.3 pp
- 5x5: 16.7 pp
- 6x6: 9.3 pp
- 7x7: 10.0 pp
- 8x8: 5.4 pp

Small boards and odd boards both amplify the effect. The worst individual pairs we found:

- 5×5, Elite (linear) vs Elite (CNN): Elite (linear) wins 100% as P1 and 0% as P2. Same two AIs, same board, same seed — the entire outcome flips on who goes first.

- 4×4, Elite (CNN) vs Hard: CNN wins 100% as P1, 8% as P2 — a 92-point swing.

- 8×8, Elite (CNN) vs Elite (linear): CNN wins 96% as P1, 32% as P2. Even on the largest board, where the parity effect is smallest, the swing between two near-peers is still 64 points.
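The swing for the three pairs above can be recomputed directly from the quoted win rates. A minimal sketch; `swing` and `mean_absolute_swing` are names introduced here, not functions from the tournament code.

```python
from statistics import mean
from typing import Iterable

def swing(first_pct: float, second_pct: float) -> float:
    """A's win rate moving first minus A's win rate moving second, in points."""
    return first_pct - second_pct

def mean_absolute_swing(swings: Iterable[float]) -> float:
    """Average magnitude of the swing over a collection of pairs."""
    return mean(abs(s) for s in swings)

# Win rates quoted in the list above (A as P1, A as P2).
quoted = {
    "5x5 Elite (linear) vs Elite (CNN)": swing(100, 0),
    "4x4 Elite (CNN) vs Hard": swing(100, 8),
    "8x8 Elite (CNN) vs Elite (linear)": swing(96, 32),
}
for pair, s in quoted.items():
    print(f"{pair}: {s:.0f} pp")
```

`mean_absolute_swing` is the per-board aggregate: feed it the swings of all 20 ordered pairs on one board size. Applying it to only these three hand-picked extremes would overstate the roster-wide figure.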

This is the number that matters for players. “Elite beats Hard” is only true if you give Elite the first move. Force Elite to go second on 5×5, and a strong-enough Hard copy can take the game.

What we chose not to do

There are three standard mitigations for first-player imbalance in abstract games, plus a two-game match format. We considered all four:

- Komi — give P2 a fixed score bonus. Works for Go because Go has decades of data on what the bonus should be. For us, the correct komi varies wildly by board size (roughly 0.5 points on 8×8, something like 8 points on 7×7 based on mean margins), and a single number can’t capture that.

- Pie rule — P1 plays the first move, then P2 chooses whether to swap sides. Elegant and board-size-agnostic. But it pushes decision-making onto the player at move one, which is not a great onboarding experience for a casual mobile game.

- Random assignment — flip a coin for who goes first. Doesn’t fix the imbalance, it averages over it. Over many games a player will be P1 and P2 roughly equally often, which is fair in expectation but not within a single match.

- Two-game match — each pair plays twice, once from each starting position, and the highest total score takes the overall match. This is the mitigation we plan to ship when online play lands: it neutralizes the parity effect per matchup without a magic-number komi or asking new players to reason about the pie rule on move one.
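The two-game match can be sketched in a few lines. This is an illustration under stated assumptions, not shipped code: the `play` callback, the player labels, the made-up scores in the stubs, and the choice to call equal totals a draw are all hypothetical.

```python
from typing import Callable, Tuple

Scores = Tuple[int, int]  # (first mover's score, second mover's score)

def two_game_match(play: Callable[[str, str], Scores], a: str, b: str) -> str:
    """Play a and b twice, once from each seat, and compare total scores."""
    a1, b1 = play(a, b)  # game 1: a moves first
    b2, a2 = play(b, a)  # game 2: b moves first
    total_a, total_b = a1 + a2, b1 + b2
    if total_a > total_b:
        return a
    if total_b > total_a:
        return b
    return "draw"  # assumption: equal totals count as a drawn match

def first_mover_wins(first: str, second: str) -> Scores:
    """Stub: equal-strength players, first mover always wins 14 to 11 (made up)."""
    return 14, 11

def a_is_stronger(first: str, second: str) -> Scores:
    """Stub: same seat advantage, but player "A" scores 2 extra points (made up)."""
    score = {first: 14, second: 11}
    score["A"] += 2
    return score[first], score[second]

print(two_game_match(first_mover_wins, "A", "B"))
print(two_game_match(a_is_stronger, "A", "B"))
```

In the second stub the weaker player still wins the single game where it moves first, but the match total goes to the stronger player, which is the behaviour the format is meant to produce.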

Today the single-player app doesn’t apply any rule-level mitigation — the stats above are exactly the tax in play. The more interesting open question — whether to also ship a board-size-dependent komi or a pie-rule toggle for competitive play — is one we’d want to answer with player data, not a priori.

What we’d want next

The analysis has obvious extensions. Computing the break-even komi per board size — the fixed point bonus that pushes P1’s win rate to 50% — would give us a concrete number per board to argue about. Running the same measurement against a wider roster of policies (including AlphaZero teacher checkpoints we didn’t ship) would tell us whether the 96.8% ceiling is really a property of the game or a property of our current Elite. And if we ever did ship a competitive online mode, the pie rule is cheap to implement and actually addresses the problem at its source.
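A break-even komi search could look like the sketch below. It assumes each self-mirror game is reduced to a margin (P1 score minus P2 score) and that komi is restricted to half-integers so no game ties. The margins in the example are toy values chosen for illustration, not measured results, and the function names are introduced here.

```python
from typing import Iterable, List

def p1_win_rate(margins: List[int], komi: float) -> float:
    """Fraction of games P1 still wins after P2 receives `komi` points."""
    return sum(1 for m in margins if m - komi > 0) / len(margins)

def break_even_komi(margins: List[int], candidates: Iterable[float]) -> float:
    """Candidate komi whose adjusted P1 win rate is closest to 50%."""
    return min(candidates, key=lambda k: abs(p1_win_rate(margins, k) - 0.5))

# Toy margins (P1 score minus P2 score); NOT data from the experiment.
toy_margins = [1, 1, 2, 2, 3, 3, 4, -1, 0, 5, 3, 3]
half_integers = [k + 0.5 for k in range(0, 10)]

komi = break_even_komi(toy_margins, half_integers)
print(komi, p1_win_rate(toy_margins, komi))
```

Run per board size on real margins, this would give one concrete number per board. A per-tier breakdown would show whether a single komi per board can serve both Easy and Elite.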

Takeaway

A symmetric-looking game is not a fair game. We shipped five board sizes without measuring first-player advantage on any of them, on the assumption that a coin flip at match start would be enough. It isn’t — not on odd boards, not at Elite strength, and certainly not in a match between two peers where going second flips the win rate from 100% to 0%. If your game has two players, alternating moves, and no compensation rule, run the self-mirror experiment before you ship. The number might be bigger than you think.

The tournament heatmap post that flagged this question without quantifying it is "Tournament Heatmaps: Is the Difficulty Ladder Graduated?"